Google has released Gemini Embedding 2, a multimodal embedding model that works with text, images, video, audio, and documents. Instead of handling each data type separately, it maps everything into a single vector space for semantic search, classification, clustering, RAG (retrieval-augmented generation), and similar tasks, which makes the workflow more unified and less messy. It supports over 100 languages, text of up to 8,192 tokens, up to six images (PNG/JPEG) per request, video of up to 120 seconds (MP4/MOV), audio, and PDF documents of up to six pages.

The model uses Matryoshka Representation Learning (MRL), which lets you reduce the output dimension from the default 3,072 to 1,536 or 768. That trades some quality for lower storage and faster search, which is handy for systems that need to store many vectors.
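MRL trains the model so that the leading dimensions of the vector still work as a smaller embedding on their own, so shrinking a vector is mostly a matter of keeping a prefix and re-normalizing it. Here is a minimal plain-Python sketch of that mechanic. The random vectors are stand-ins, not real Gemini output, and `truncate_embedding` and `similarity` are hypothetical helpers. Whether the API already returns normalized vectors at the smaller sizes is something to check in the Gemini docs.

```python
import math
import random


def truncate_embedding(vector: list[float], dim: int) -> list[float]:
    """Keep the first `dim` values of a Matryoshka-style embedding and rescale
    the result to unit length so dot products behave like cosine similarity."""
    if not 1 <= dim <= len(vector):
        raise ValueError(f"dim must be between 1 and {len(vector)}")
    head = vector[:dim]
    norm = math.sqrt(sum(x * x for x in head))
    if norm == 0:
        raise ValueError("cannot normalize an all-zero vector")
    return [x / norm for x in head]


def similarity(a: list[float], b: list[float]) -> float:
    """Dot product; equals cosine similarity when both inputs are unit vectors."""
    return sum(x * y for x, y in zip(a, b))


# Random stand-ins for two 3,072-dimensional embeddings (NOT real model output).
random.seed(42)
doc = [random.gauss(0, 1) for _ in range(3072)]
query = [d + random.gauss(0, 0.5) for d in doc]  # a noisy copy, so it is "similar"

for dim in (3072, 1536, 768):
    a = truncate_embedding(doc, dim)
    b = truncate_embedding(query, dim)
    print(f"{dim:>4} dims -> similarity {similarity(a, b):.3f}")
```

In a real system you would store only the truncated vectors: 768 floats instead of 3,072 is a quarter of the storage per item.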

The model is currently in public preview through the Gemini API and Vertex AI, and a ready-made notebook is available for testing.

canirun.ai is a handy website that collects various hardware setups and shows how well they run today's open-source large language models. The useful part is that it lists detailed generation speeds in tokens per second. That gives you reference data if you want to build a system that's just powerful enough to run a local model yourself.

The other day I came across this post on Facebook: https://www.facebook.com/share/p/1DYEgsx5Kh/.

It shows how to create a JavaScript snippet that adds a panel listing the messages in a ChatGPT web conversation. This is really handy, and I hadn't thought of it: long conversations often require endless scrolling, which gets tiring and makes them hard to follow.

In the post, the author only provides a prompt so we can "vibe-code" it ourselves. I tried it and it works quite well, but a few spots could still be improved. I'll probably set up a GitHub repository soon and open-source my code, since I find it pretty interesting 😁.

startups.rip is a website that collects the projects funded by Y Combinator (YC), the well-known startup accelerator and venture capital firm. It covers projects from 2005 to today, both successes and failures, and, importantly, gives detailed information about each story: the journey and the reasons behind the outcome. It's definitely worth reading.

Both OpenAI and Anthropic are offering open-source project maintainers six months of free access to their Pro and Max plans. Anthropic requires a project to have at least 5,000 GitHub stars or more than one million monthly downloads on npm, while OpenAI's eligibility criteria are much broader.

If you don't meet those thresholds, you can still submit a short "letter of intent" explaining why you should receive the grant. This suggests the companies are willing to hear open-source developers' stories.

From what I've seen, the program has drawn quite a bit of criticism. Some community members argue that, because both companies train their models on open-source repositories, six months of free access is not enough. Others defend it, saying six months is fairly generous given that many open-source maintainers earn nothing from their projects. What do you think?

(Images: "Codex for Open Source" and "Thank you for everything you ship. Claude Max is on us")

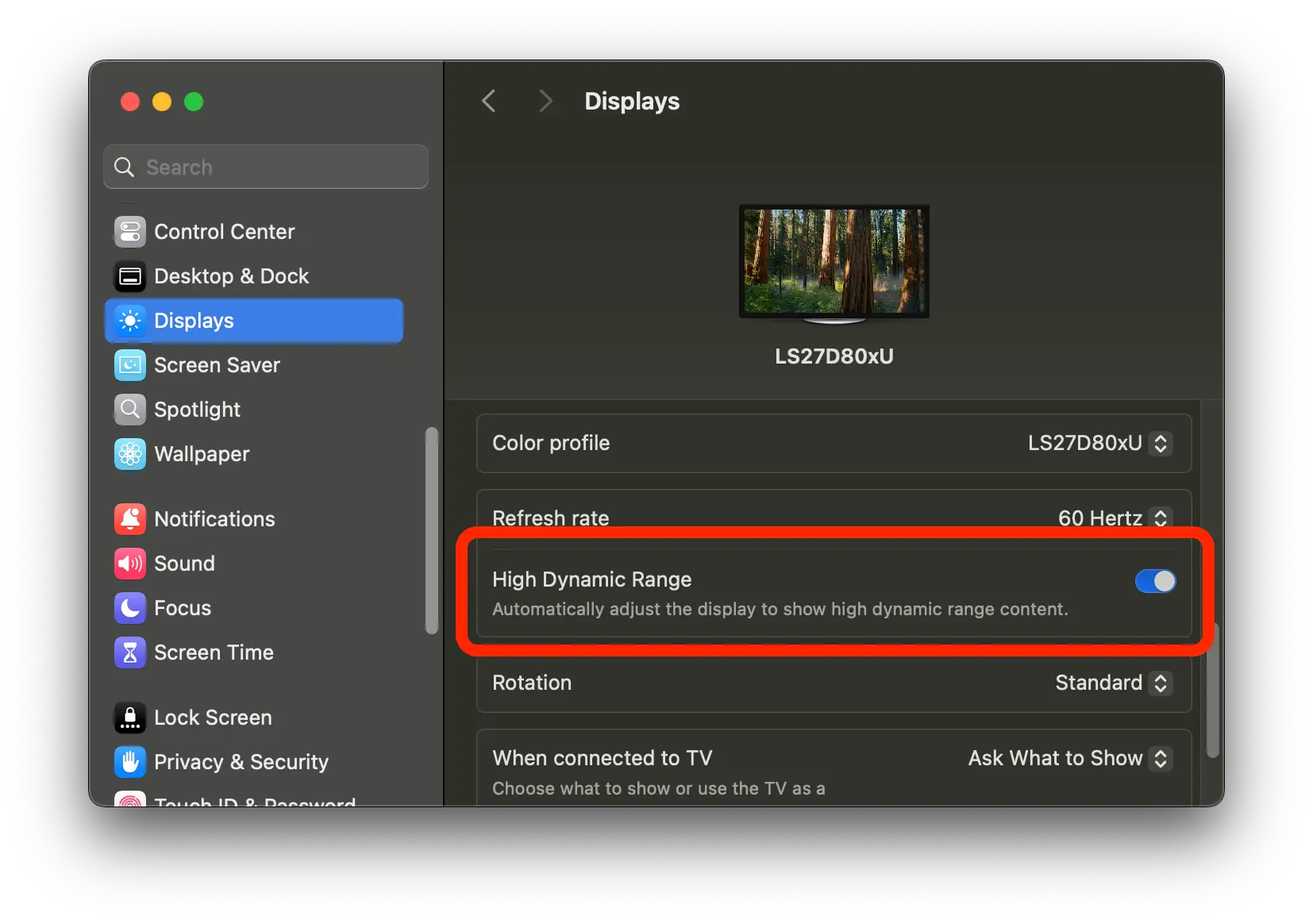

If you use macOS with an external monitor, check whether there's an option to enable this mode. I turned it on yesterday and the colors looked noticeably more vivid. I've had the ViewFinity S8 for a whole year. It's not a bad monitor, but for some reason the colors looked washed out whenever I plugged it in, and the difference was obvious next to the MacBook's screen. Adjusting the monitor's own settings didn't help either. It turns out the fix was that one toggle; I'd been a bit clueless.

These past few days I’ve been setting up additional system alerts for some of the servers I manage.

Monit is a tool that has been around for decades but is still very capable. It's open source, written in C, easy to install and configure, and uses very few system resources. In short, Monit lets you define alert conditions, and when one is met it automatically triggers an alert: for example, when a process suddenly disappears, when a port stops responding, and in many other situations.

Because of its long history, Monit even has a "showcase" page collecting how people around the world use it; see Real-world configuration examples.
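To illustrate the idea of threshold-based alerting (this is not Monit's own configuration syntax), here is a minimal Python sketch. It pairs a TCP port check with a rule that alerts only after several consecutive failures and reports when the service recovers, roughly in the spirit of Monit's "for N cycles" conditions. `port_is_open` and `CycleRule` are hypothetical names for this example, and the demo feeds in simulated results so the behaviour is predictable.

```python
import socket
from collections import deque
from typing import Optional


def port_is_open(host: str, port: int, timeout: float = 1.0) -> bool:
    """Return True if a TCP connection to host:port can be established."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


class CycleRule:
    """Alert only after `cycles` consecutive failed checks, then report recovery
    once a check succeeds again (hypothetical helper, loosely modelled on
    Monit's 'for N cycles' conditions)."""

    def __init__(self, name: str, cycles: int = 3):
        self.name = name
        self.cycles = cycles
        self.recent = deque(maxlen=cycles)  # results of the last N checks
        self.alerting = False

    def update(self, ok: bool) -> Optional[str]:
        self.recent.append(ok)
        all_failed = len(self.recent) == self.cycles and not any(self.recent)
        if all_failed and not self.alerting:
            self.alerting = True
            return f"ALERT: {self.name} failed {self.cycles} checks in a row"
        if ok and self.alerting:
            self.alerting = False
            return f"RECOVERED: {self.name}"
        return None


# Simulated check results instead of real ones, so the output is predictable.
rule = CycleRule("web port 80", cycles=3)
for i, ok in enumerate([True, False, False, False, False, True]):
    event = rule.update(ok)
    if event:
        print(f"cycle {i}: {event}")
```

Waiting for several failures in a row avoids alerting on a single dropped check, which is the same reason Monit lets you attach a cycle count to a condition.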

Yesterday afternoon I spent time using AI to create a set of Skills for AI Agents.

If you've ever built features in a CMS that supports a company's workflows or applications, you're surely familiar with the requests other teams make. For example, the Marketing team might ask for a report on how well a recent campaign performed, or for a feature that sends bulk emails to a specific group of users. In those cases we try to build the feature as efficiently as possible and make it easy enough that the requester can use it without asking many questions.

The feature I just built is very simple: it takes a list of users, calls an API to update each one, and returns a detailed report of successes and failures. I had built something similar before, but it was buggy and unusable, and after reviewing it I found it too complicated and costly to rewrite from scratch. Since AI Agents are becoming mainstream and our company is actively adopting AI in our work, I decided to create a dedicated set of Skills for this task instead. With just one Markdown file, a Bash script, and a few prompts, the problem was solved. Usage is straightforward: any tool that supports AI Agents and can read Skills can use it.

This could be the future of CMS operations: no fancy UI and no buttons. You describe what you want in natural language and get the results back in natural language, which is very user-friendly. In practice, all the real work happens in the Bash script, which contains the processing logic. Even without AI Agents you could run the Bash command manually, but adding AI makes it much easier for people who aren't comfortable with the command line.

Looking further ahead, each CMS feature could be packaged as a set of Skills, with a Bash script (or a more powerful CLI application) handling the core business logic. A clearly defined output means that not only AI but also humans can build additional interfaces on top of it if needed. Previously, MCP (Model Context Protocol) servers were built to fetch data for AI to process, but they were somewhat complex; CLIs are more approachable, so developers gravitated toward building CLIs. Nowadays anything can be built quickly because AI does most of the work and we just describe what we want. The real advantage now lies in experience with system implementation. 😄
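The core of a Skill like this is just a loop that calls the API for each user and records what happened. The author's version is a Bash script whose details aren't shown, so here is the same idea sketched in Python. `bulk_update` is a hypothetical name, and `fake_update_user` is a hypothetical stand-in for the real CMS API call: it rejects ids that start with "x".

```python
import json
from typing import Callable, Iterable


def bulk_update(user_ids: Iterable[str], update_user: Callable[[str], None]) -> dict:
    """Call `update_user` for each id and collect a success/failure report.
    `update_user` is expected to raise an exception when an update fails."""
    succeeded, failed = [], []
    for uid in user_ids:
        try:
            update_user(uid)
        except Exception as exc:  # record the failure and keep processing the rest
            failed.append({"id": uid, "error": str(exc)})
        else:
            succeeded.append(uid)
    return {
        "total": len(succeeded) + len(failed),
        "succeeded": succeeded,
        "failed": failed,
    }


def fake_update_user(uid: str) -> None:
    """Hypothetical stand-in for the real API call."""
    if uid.startswith("x"):
        raise RuntimeError("user not found")


report = bulk_update(["u1", "u2", "x3"], fake_update_user)
print(json.dumps(report, indent=2))
```

Catching errors per user, instead of stopping at the first one, is what makes a "detailed report of successes and failures" possible. The agent only needs to read the JSON and summarize it for the requester.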
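The "clearly defined output" idea can be sketched as a small CLI with a fixed contract: JSON on stdout and a meaningful exit code. That way an AI agent, a shell script, or a future UI can all consume it the same way. This is a Python sketch that assumes nothing about the author's actual tool. The `cms-users` name and the `fake_update` stand-in are hypothetical.

```python
import argparse
import json
from typing import List, Optional, Tuple


def build_parser() -> argparse.ArgumentParser:
    parser = argparse.ArgumentParser(
        prog="cms-users",  # hypothetical tool name
        description="Update CMS users and print a JSON report to stdout.",
    )
    parser.add_argument("ids", nargs="+", help="user ids to update")
    parser.add_argument("--dry-run", action="store_true",
                        help="report what would happen without calling the API")
    return parser


def fake_update(uid: str) -> bool:
    """Hypothetical stand-in for the real API call: ids starting with 'x' fail."""
    return not uid.startswith("x")


def run(argv: Optional[List[str]] = None) -> Tuple[int, dict]:
    """Parse arguments, do the work, and return (exit_code, report)."""
    args = build_parser().parse_args(argv)
    results = []
    for uid in args.ids:
        if args.dry_run:
            status = "skipped"
        else:
            status = "updated" if fake_update(uid) else "failed"
        results.append({"id": uid, "status": status})
    # Output contract: exit code 0 when nothing failed, 1 otherwise.
    exit_code = 1 if any(r["status"] == "failed" for r in results) else 0
    return exit_code, {"dry_run": args.dry_run, "results": results}


def main(argv: Optional[List[str]] = None) -> int:
    exit_code, report = run(argv)
    print(json.dumps(report))
    return exit_code


# A real script would end with: if __name__ == "__main__": sys.exit(main())
main(["u1", "x2"])
```

Keeping the logic in `run` and the printing in `main` means the same function can back a Skill, a cron job, or a web button without changing the output format.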

This might be useful for many people. Jina is a research company that offers solutions for LLM applications. I first learned about it while exploring embedding models for semantic search.

They also offer a range of other tools, one of which converts any article URL into Markdown so it's more LLM-friendly. As you know, Markdown (.md) is more popular than ever because it's an easy way to supply context for large language models to process. Now you can get an article's content with a simple API call.
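The post doesn't name the tool, but it sounds like Jina Reader, which (as far as I know) works by putting `https://r.jina.ai/` in front of the article URL. Assuming that endpoint, here is a minimal standard-library sketch. `reader_url` and `fetch_markdown` are hypothetical helper names, and the User-Agent string is arbitrary.

```python
import urllib.request

READER_PREFIX = "https://r.jina.ai/"  # assumption: the Jina Reader endpoint


def reader_url(article_url: str) -> str:
    """Build the Reader URL by prefixing the article's absolute URL."""
    if not article_url.startswith(("http://", "https://")):
        raise ValueError("expected an absolute http(s) URL")
    return READER_PREFIX + article_url


def fetch_markdown(article_url: str, timeout: float = 30.0) -> str:
    """Fetch the article as Markdown text (makes a network request)."""
    req = urllib.request.Request(
        reader_url(article_url),
        headers={"User-Agent": "md-fetch-example"},
    )
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        charset = resp.headers.get_content_charset() or "utf-8"
        return resp.read().decode(charset)
```

Calling `fetch_markdown("https://example.com")` needs network access. Check Jina's documentation for current rate limits and optional API keys before relying on it in a pipeline.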

No wonder Cloudflare is so active in fighting AI “scraping” of web content 😃

My first post after the New Year. I took a week off to get my work back on track, and starting today I’ll be posting regularly again.

By the way, I’m building a few agents that use agent skills to serve as assistants for running and growing the blog. It’s still in development, and I’ll share a showcase post when it looks solid enough 😅

Lately I've noticed that long articles online are often labeled "AI-generated" and then dismissed as worthless. What do you think about this? Do people dislike AI-written articles because of their quality, or simply because they're made by AI 🤔?