Building an MCP Client - Part 2: CLI Client

In this Part 2, we will build a simple command-line (CLI) client. The user types a request, the application asks the model which tool on the MCP server fits that request, calls it, and prints the result. For simplicity, we will reuse the 2coffee.dev MCP server created in the previous articles. You can find its source code in Creating a Simple MCP Server for 2coffee.dev.

Anthropic's documentation includes a guide to building an MCP client with their @anthropic-ai/sdk library. However, that library only works with Anthropic models, so instead of following it we will run a local LLM with LM Studio. LM Studio exposes OpenAI-compatible API endpoints, so we can use the openai library in place of @anthropic-ai/sdk.

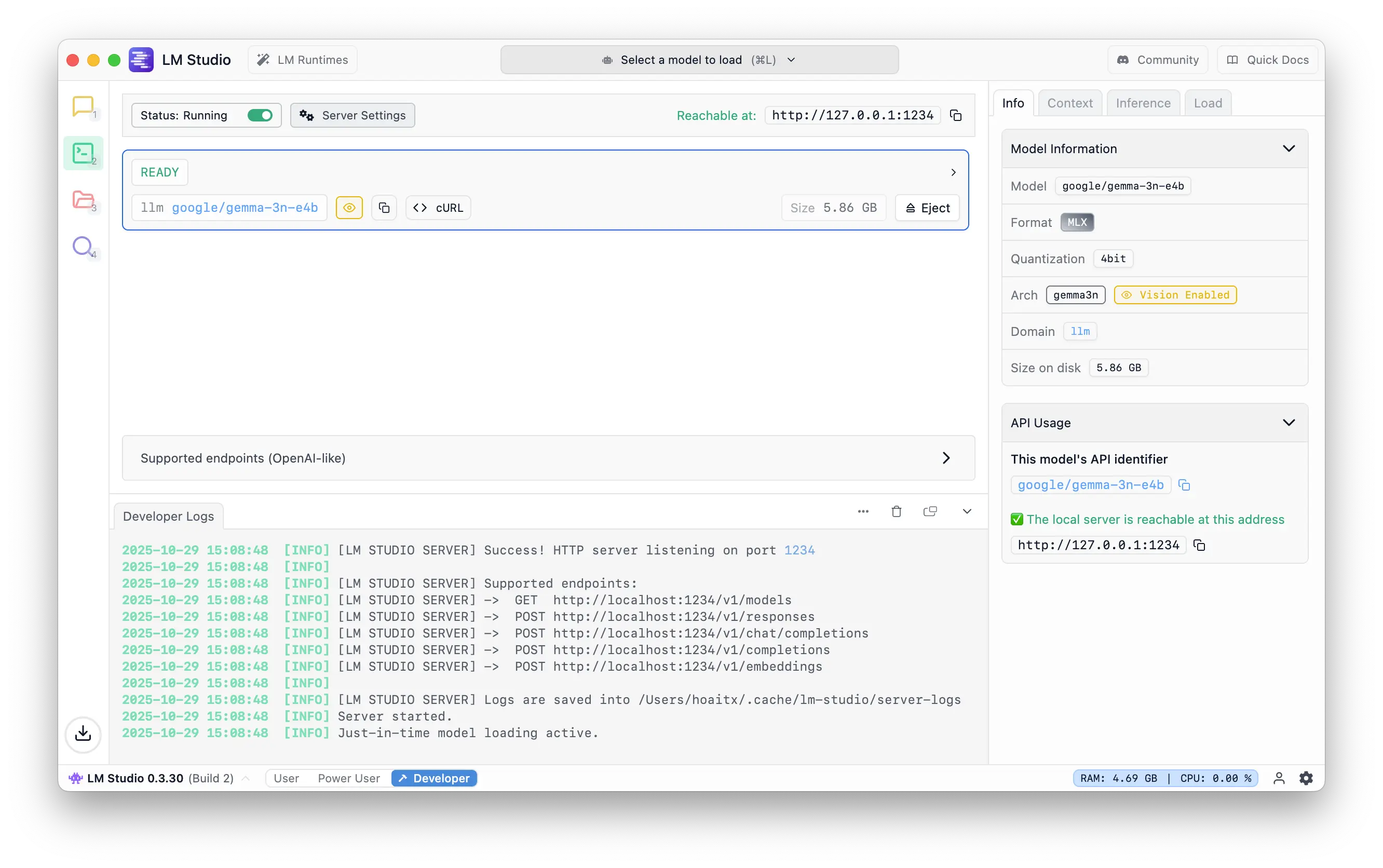

First, open LM Studio, go to the Developer tab, load a model of your choice, and start the server. Since the client relies on the model's tool calls, pick a model that supports tool use. Here, I use the google/gemma-3n-e4b model.

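Next, connect the client to the MCP server. StdioClientTransport launches the server as a child process (here, node build/index.js) and communicates with it over stdin/stdout.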

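```typescript
import { Client } from '@modelcontextprotocol/sdk/client/index.js';
import { StdioClientTransport } from '@modelcontextprotocol/sdk/client/stdio.js';

// Spawn the MCP server built in the previous article and talk to it over stdio.
const transport = new StdioClientTransport({
  command: 'node',
  args: ['build/index.js'],
});

// Identifies this client to the server during the MCP handshake.
const client = new Client({
  name: 'mcp-2coffee-client',
  version: '1.0.0',
});

// Performs the initialize handshake; top-level await requires an ES module.
await client.connect(transport);
```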
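Under the hood, client.connect performs the MCP handshake over the child process's stdin/stdout. As a rough sketch of the wire format (not the SDK's own code), the first message is a JSON-RPC 2.0 initialize request written as a single line of JSON; after the server answers, the client follows up with a notifications/initialized notification. The helper names initializeRequest and frame are hypothetical, and the protocolVersion value is an assumption, since the SDK sends whichever version it supports.

```typescript
// Wire-format sketch only: Client.connect() in the MCP SDK builds and sends this itself.
interface JsonRpcRequest {
  jsonrpc: '2.0';
  id: number;
  method: string;
  params?: Record<string, unknown>;
}

// Hypothetical helper: the initialize request that opens an MCP session.
function initializeRequest(id: number, name: string, version: string): JsonRpcRequest {
  return {
    jsonrpc: '2.0',
    id,
    method: 'initialize',
    params: {
      protocolVersion: '2025-06-18', // assumption: the SDK sends the version it supports
      capabilities: {},
      clientInfo: { name, version },
    },
  };
}

// Stdio framing: one JSON message per line. JSON.stringify without indentation
// never emits a raw newline (newlines inside strings are escaped).
function frame(message: JsonRpcRequest): string {
  return JSON.stringify(message) + '\n';
}

const line = frame(initializeRequest(1, 'mcp-2coffee-client', '1.0.0'));
console.log(line.trimEnd());
```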

Then create an OpenAI client that points at the LM Studio server.

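```typescript
import OpenAI from 'openai';

// Must match the model identifier loaded in LM Studio.
const MODEL = 'google/gemma-3n-e4b';

const openai = new OpenAI({
  // LM Studio does not require a real key by default, but the SDK needs a non-empty value.
  apiKey: process.env.OPENAI_API_KEY ?? 'lmstudio',
  // LM Studio's local server listens on port 1234 by default.
  baseURL: process.env.OPENAI_BASE_URL ?? 'http://127.0.0.1:1234/v1',
});
```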
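Because LM Studio speaks the OpenAI wire format, the openai library is mostly a convenience here. The sketch below, built around a hypothetical buildChatRequest helper, shows roughly what a chat completion request looks like on the wire: a POST to {baseURL}/chat/completions with a Bearer token and a JSON body. It only builds the request; actually sending it with fetch assumes LM Studio is running at the default address used above.

```typescript
// Hypothetical helper: build the HTTP request for an OpenAI-compatible
// chat completions endpoint without sending it.
interface ChatBody {
  model: string;
  messages: { role: string; content: string }[];
}

interface BuiltRequest {
  url: string;
  init: { method: 'POST'; headers: Record<string, string>; body: string };
}

function buildChatRequest(baseURL: string, apiKey: string, body: ChatBody): BuiltRequest {
  return {
    // Strip trailing slashes so "…/v1" and "…/v1/" give the same URL.
    url: `${baseURL.replace(/\/+$/, '')}/chat/completions`,
    init: {
      method: 'POST',
      headers: {
        'Content-Type': 'application/json',
        Authorization: `Bearer ${apiKey}`,
      },
      body: JSON.stringify(body),
    },
  };
}

const req = buildChatRequest('http://127.0.0.1:1234/v1', 'lmstudio', {
  model: 'google/gemma-3n-e4b',
  messages: [{ role: 'user', content: 'Latest articles about MCP?' }],
});
// With LM Studio running, this could be sent with: await fetch(req.url, req.init)
console.log(req.url);
```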

Recall the client-server flow from the previous article: when the user issues a request, the client sends it to the LLM server together with the list of tools provided by the MCP server. The model then replies either with a direct answer or with a request to call one of those tools.
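This round trip can be simulated without any server. In the sketch below, fakeModel and fakeTool are hypothetical stand-ins for LM Studio and the MCP server, and search_articles is an invented tool name; only the control flow (ask the model, run the requested tool, hand the result back) mirrors the client built in this article.

```typescript
// Hypothetical shapes for the simulation; not the openai or MCP SDK types.
type ToolCall = { id: string; name: string; arguments: string };
type ModelReply = { content: string | null; toolCalls: ToolCall[] };

// Stand-in for the LLM: requests a tool when the input mentions "articles",
// and summarizes once it is given a tool result.
function fakeModel(userInput: string, toolResult?: string): ModelReply {
  if (toolResult !== undefined) {
    return { content: `Here is what I found: ${toolResult}`, toolCalls: [] };
  }
  if (userInput.includes('articles')) {
    return {
      content: null,
      toolCalls: [{ id: 'call_1', name: 'search_articles', arguments: '{"query":"mcp"}' }],
    };
  }
  return { content: 'No tool needed.', toolCalls: [] };
}

// Stand-in for client.callTool on the MCP server.
function fakeTool(name: string, args: { query: string }): string {
  return `${name} found 1 article about ${args.query}`;
}

// Same control flow as chatOnce: first round, optional tool call, second round.
function chatOnceSimulated(userInput: string): string {
  const first = fakeModel(userInput);
  if (first.toolCalls.length === 0) return first.content ?? '';
  const call = first.toolCalls[0];
  const result = fakeTool(call.name, JSON.parse(call.arguments));
  return fakeModel(userInput, result).content ?? '';
}

console.log(chatOnceSimulated('find articles about MCP'));
```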

Create a function named chatOnce to hold the application's main logic.

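```typescript
async function chatOnce(userInput: string) {
  // First round: send the user's request together with the MCP tools.
  const first = await openai.chat.completions.create({
    model: MODEL,
    messages: [{ role: 'user', content: userInput }],
    tools: openaiTools,
    // Let the model decide whether to call a tool or answer directly.
    tool_choice: 'auto',
  });
}
```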

Here, openaiTools is the list of tools exposed by the MCP server. The tools parameter must follow OpenAI's function-calling format, so the tool list returned by the MCP server has to be converted first.

```typescript
// Convert MCP tool definitions into OpenAI's function-tool format.
function toOpenAITools(tools: Awaited<ReturnType<typeof client.listTools>>['tools']) {
  return tools.map(t => ({
    type: 'function' as const,
    function: {
      name: t.name,
      description: t.description ?? t.title ?? '',
      // MCP input schemas are JSON Schema objects, which OpenAI accepts as `parameters`.
      parameters: (t.inputSchema as any) ?? { type: 'object', properties: {} },
    },
  }));
}

const { tools: mcpTools } = await client.listTools();
const openaiTools = toOpenAITools(mcpTools);
```
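To see what the conversion produces, here is a self-contained version with small local types standing in for the SDK types (an assumption: they mirror only the fields used here, not the full MCP or OpenAI definitions). The search_articles tool is hypothetical.

```typescript
// Local stand-ins, assumed to mirror the relevant fields of the MCP Tool type
// and OpenAI's function-tool parameter.
interface McpTool {
  name: string;
  title?: string;
  description?: string;
  inputSchema: { type: 'object'; properties?: Record<string, unknown>; required?: string[] };
}

interface OpenAIFunctionTool {
  type: 'function';
  function: { name: string; description: string; parameters: Record<string, unknown> };
}

function toOpenAITools(tools: McpTool[]): OpenAIFunctionTool[] {
  return tools.map((t) => ({
    type: 'function' as const,
    function: {
      name: t.name,
      // Prefer the description, fall back to the human-readable title.
      description: t.description ?? t.title ?? '',
      // The JSON Schema is passed through unchanged.
      parameters: t.inputSchema,
    },
  }));
}

// Hypothetical tool, for illustration only.
const example: McpTool[] = [
  {
    name: 'search_articles',
    description: 'Search 2coffee.dev articles by keyword',
    inputSchema: { type: 'object', properties: { query: { type: 'string' } }, required: ['query'] },
  },
];

const converted = toOpenAITools(example);
console.log(JSON.stringify(converted, null, 2));
```

Since MCP's inputSchema is already a JSON Schema object, only the wrapper shape around it changes.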

If the model decides that a tool is needed, its reply contains tool_calls, and the client executes the requested tool on the MCP server with client.callTool.

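```typescript
// Run one tool call requested by the model against the MCP server.
async function executeToolCall(call: any) {
  const name = call.function.name;
  // The model sends arguments as a JSON string; an empty value means no arguments.
  const args = call.function.arguments ? JSON.parse(call.function.arguments) : {};
  const result = await client.callTool({ name, arguments: args });
  // MCP returns an array of content blocks; keep the text blocks and join them
  // into one string so it can be used as the tool message's content.
  const content = (result.content as any[])
    .filter((c) => c.type === 'text')
    .map((c) => c.text)
    .join('\n');
  return { tool_call_id: call.id, content };
}
```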
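executeToolCall passes the model's arguments string straight to JSON.parse, which throws if the string is not valid JSON. Smaller local models can occasionally produce malformed arguments, so a more defensive variant might look like the hypothetical parseToolArguments below, which returns an error value instead of throwing.

```typescript
// Result of parsing tool_calls[i].function.arguments.
type ParsedArgs =
  | { ok: true; args: Record<string, unknown> }
  | { ok: false; error: string };

// Hypothetical helper: empty input means "no arguments"; anything that is not
// a JSON object is reported as an error rather than thrown.
function parseToolArguments(raw: string | undefined): ParsedArgs {
  if (!raw || raw.trim() === '') return { ok: true, args: {} };
  try {
    const value: unknown = JSON.parse(raw);
    if (typeof value !== 'object' || value === null || Array.isArray(value)) {
      return { ok: false, error: 'arguments must be a JSON object' };
    }
    return { ok: true, args: value as Record<string, unknown> };
  } catch (e) {
    return { ok: false, error: `invalid JSON: ${(e as Error).message}` };
  }
}

console.log(parseToolArguments('{"query":"mcp"}'));
console.log(parseToolArguments('{"query":'));
```

On failure, the error text could be sent back to the model as the tool message content, giving it a chance to retry instead of crashing the CLI.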

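```typescript
  // Inside chatOnce, after the first completion:
  const msg = first.choices[0].message;

  // No tool call: the model answered directly.
  if (!msg.tool_calls || msg.tool_calls.length === 0) {
    console.log('[assistant]', msg.content);
    return;
  }

  // For simplicity, only the first tool call is executed.
  const toolResults = await executeToolCall(msg.tool_calls[0]);
```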
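The code above executes only msg.tool_calls[0], but a model may request several tools in one reply. Here is a sketch of handling all of them, where runTool is a hypothetical wrapper around client.callTool that returns the tool's text output, and the local FunctionToolCall type is assumed to mirror the openai library's function tool call.

```typescript
// Local shapes, assumed to mirror the Chat Completions function tool call
// and tool message.
interface FunctionToolCall {
  id: string;
  function: { name: string; arguments: string };
}

interface ToolMessage {
  role: 'tool';
  tool_call_id: string;
  content: string;
}

// Execute every requested call and build one tool message per call.
async function runAllToolCalls(
  calls: FunctionToolCall[],
  runTool: (name: string, args: Record<string, unknown>) => Promise<string>,
): Promise<ToolMessage[]> {
  // Promise.all runs the calls concurrently and keeps results in the order of `calls`.
  return Promise.all(
    calls.map(async (call) => ({
      role: 'tool' as const,
      tool_call_id: call.id,
      content: await runTool(
        call.function.name,
        call.function.arguments ? JSON.parse(call.function.arguments) : {},
      ),
    })),
  );
}
```

If the tools have side effects that depend on each other, a plain for...of loop with await would be the safer choice than running them concurrently.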

At this point, toolResults holds the result returned by the MCP server. You could print it to the user as is, or go one step further and send it back to the model with an extra instruction so that it writes a more natural answer.

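```typescript
  // Still inside chatOnce: a second round lets the model turn the raw tool
  // output into a readable answer.
  const second = await openai.chat.completions.create({
    model: MODEL,
    messages: [
      { role: 'user', content: userInput },
      // The assistant message that requested the tool must precede the tool result;
      // OpenAI's API rejects a tool message without it. Only the executed call is
      // kept so that every listed tool_call_id has an answer.
      { role: 'assistant', content: msg.content, tool_calls: [msg.tool_calls[0]] },
      { role: 'tool', tool_call_id: toolResults.tool_call_id, content: toolResults.content },
      { role: 'user', content: 'Reply concisely with the title, url, and summary of each article.' },
    ],
  });

  console.log('[assistant]', second.choices[0].message.content);
```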
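In the Chat Completions format, a tool message is expected to answer a tool_call_id announced by the preceding assistant message, so the follow-up request should place that assistant message between the user's request and the tool result. The sketch below uses local types assumed to mirror that format; buildFollowUp and toolMessagesAreAnswered are hypothetical helpers, and the check is a simplified version of the rule.

```typescript
// Local message shapes, assumed to mirror the Chat Completions format.
interface ToolCall {
  id: string;
  type: 'function';
  function: { name: string; arguments: string };
}
type UserMessage = { role: 'user'; content: string };
type AssistantMessage = { role: 'assistant'; content: string | null; tool_calls?: ToolCall[] };
type ToolMessage = { role: 'tool'; tool_call_id: string; content: string };
type Message = UserMessage | AssistantMessage | ToolMessage;

// Hypothetical helper: assemble the follow-up conversation in the expected order.
function buildFollowUp(
  userInput: string,
  assistant: AssistantMessage,
  toolMessages: ToolMessage[],
  instruction: string,
): Message[] {
  return [
    { role: 'user', content: userInput },
    assistant, // carries the tool_calls that the tool messages answer
    ...toolMessages,
    { role: 'user', content: instruction },
  ];
}

// Simplified check: every tool message must answer a tool_call id announced
// by an earlier assistant message.
function toolMessagesAreAnswered(messages: Message[]): boolean {
  const announced = new Set<string>();
  for (const m of messages) {
    if (m.role === 'assistant') m.tool_calls?.forEach((c) => announced.add(c.id));
    if (m.role === 'tool' && !announced.has(m.tool_call_id)) return false;
  }
  return true;
}
```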

Finally, add a few lines of code to read the user's request from the terminal.

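```typescript
import readline from 'readline';

const rl = readline.createInterface({
  input: process.stdin,
  output: process.stdout,
});

rl.question('> What would you like to search for? ', async (input) => {
  rl.close();
  try {
    await chatOnce(input);
  } catch (err) {
    console.error(err);
  } finally {
    // Close the MCP connection so the server process exits and the CLI can terminate.
    await client.close();
  }
});
```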
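The CLI above answers a single question and exits. A hypothetical variant that keeps prompting until the user types exit can rely on the fact that a Node.js readline interface is async-iterable, yielding one line at a time. The repl helper below takes the input stream and a handler as parameters, so it can be driven by process.stdin or, as in the demo, by scripted input; wiring the handler to chatOnce is left as an assumption.

```typescript
import * as readline from 'node:readline';
import { Readable } from 'node:stream';

// Hypothetical helper: pass each non-empty line to `handle` until "exit"
// or the end of input, and collect the replies.
async function repl(
  input: NodeJS.ReadableStream,
  handle: (line: string) => Promise<string>,
): Promise<string[]> {
  const rl = readline.createInterface({ input });
  const replies: string[] = [];
  // Leaving the loop (break, end of input, or an error) closes the interface.
  for await (const raw of rl) {
    const line = raw.trim();
    if (line === 'exit') break;
    if (line === '') continue;
    replies.push(await handle(line));
  }
  return replies;
}

// Demo with scripted input instead of the terminal.
repl(Readable.from(['latest posts\n', 'exit\n']), async (q) => `You asked: ${q}`).then((r) =>
  console.log(r),
);
```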

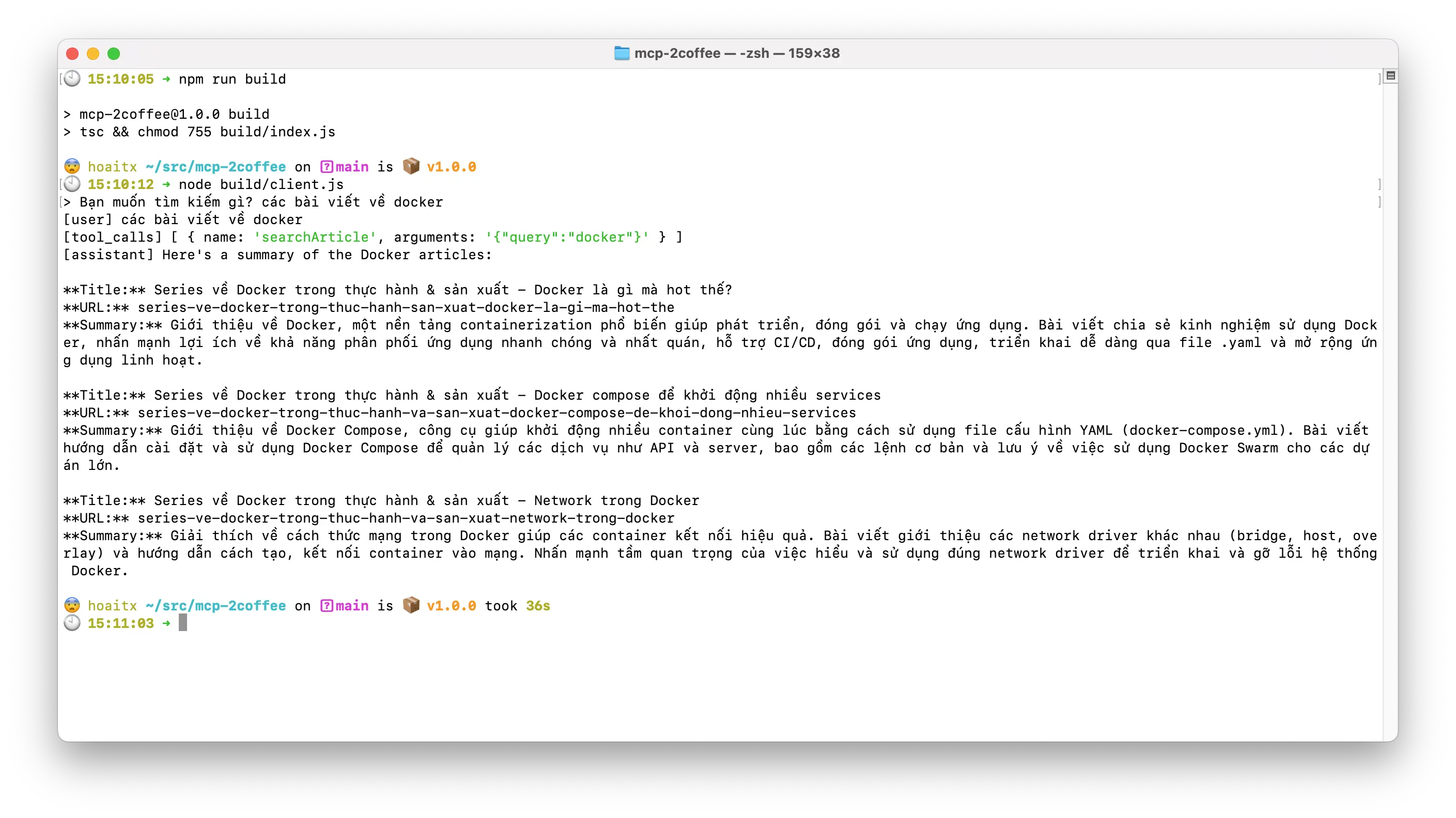

Build the application:

```shell
$ npm run build
```

Then run it:

```shell
$ node build/client.js
```

Type something and see the result.

That's it. Quite simple, isn't it? Hopefully this article gives you a clear picture of the steps needed to build an MCP client. The full source code is available on GitHub.